AI Contextual Governance: Business Evolution and Adaptation

Your AI models are live in production. Your compliance team thinks governance is handled. But somewhere between the policy binders and the deployment dashboards, something critical is missing—and it’s costing companies millions in misaligned decisions, regulatory exposure, and strategic drift.

That gap has a name: contextual intelligence. And filling it is what separates organizations that scale AI responsibly from those that get burned by it.

AI contextual governance isn’t another compliance checkbox. It’s the operating layer that ensures your AI systems remain accurate, aligned, and adaptable as your business evolves—not just when models are first deployed, but continuously, across every use case, regulatory environment, and market shift that follows.

This guide breaks down how AI contextual governance works, why static frameworks fail, and how leading organizations use it to drive business evolution without breaking what’s already working.

What Is AI Contextual Governance? (And Why the Standard Definition Misses the Point)

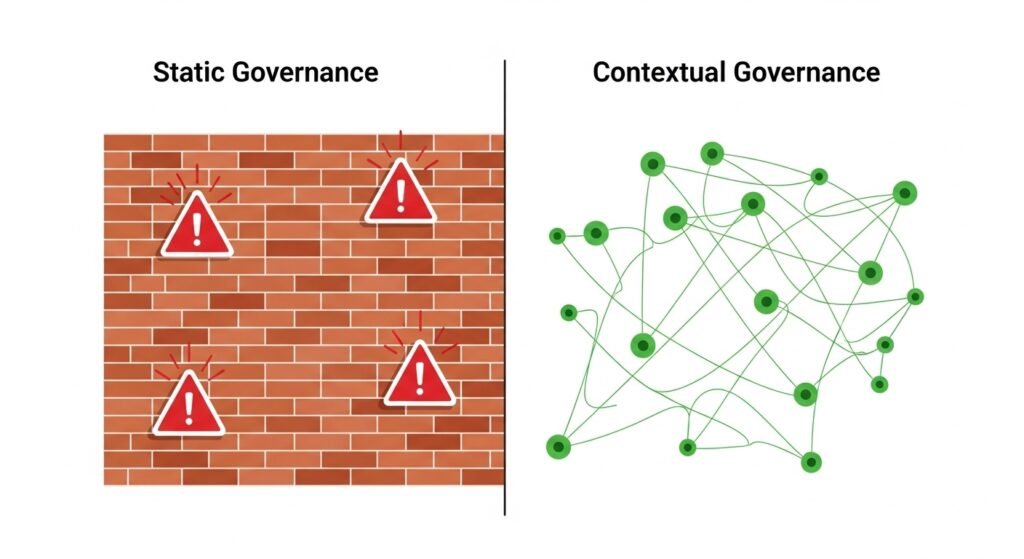

Most governance frameworks treat AI like traditional software: define the rules upfront, audit periodically, update when something breaks. That approach made sense for deterministic systems. It fails completely for AI.

Unlike rule-based software, AI systems are probabilistic and adaptive. A language model doesn’t follow instructions—it infers them. A predictive model doesn’t execute logic—it approximates it. And as the business context around those models changes—new regulations, shifting customer behaviors, different market conditions—the outputs drift away from what was originally intended, often without triggering any formal alert.

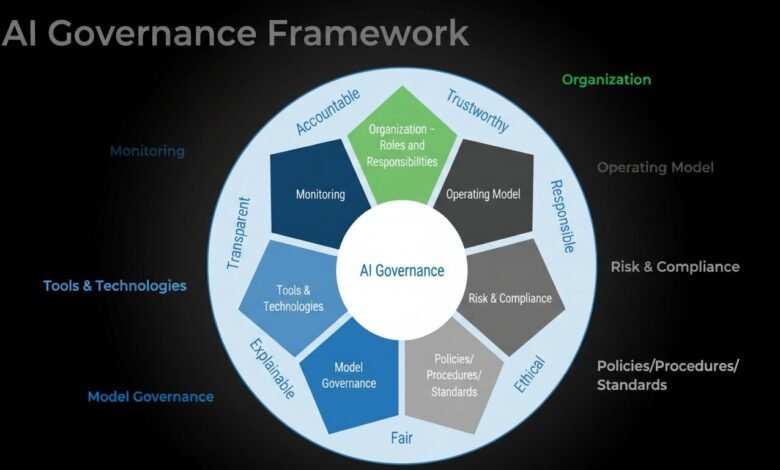

AI contextual governance is the framework that addresses this problem directly. Instead of applying blanket policies to all AI systems, it calibrates oversight dynamically, matching governance controls to each system’s actual use case, risk profile, regulatory exposure, and decision impact.

A customer-facing chatbot handling product inquiries operates under fundamentally different constraints than a credit scoring model affecting loan approvals or a clinical diagnostic tool influencing patient care. Contextual governance treats them differently—not arbitrarily, but systematically, based on measurable risk tiers, data sensitivity classifications, and accountability structures appropriate to each deployment.

The core mechanism is contextual awareness. Governance policies aren’t static documents—they’re living rule sets that respond to real-time signals: who is using the system, under what regulatory jurisdiction, with what data, for what decision purpose, and at what level of business impact.

Why Static AI Governance Fails Businesses That Are Evolving

Consider a mid-sized retail company that deploys a recommendation engine trained on pre-pandemic shopping data. Technically, the model performs well—high precision, strong recall scores. But as consumer behavior shifts post-COVID, those metrics mask a growing problem: the model is optimizing for a customer that no longer exists.

The governance team checks compliance boxes. The data scientists monitor accuracy metrics. No one is asking whether the AI is still solving the right business problem. That’s a contextual governance failure.

The same pattern plays out across industries:

- A regional credit union deploys an AI scoring model calibrated to a specific interest rate environment. Rates change. Risk profiles shift. The model keeps scoring—accurately by its own metrics—while systematically underwriting risk that the business would never accept if humans were reviewing it manually.

- A logistics provider uses an AI routing system trained to minimize delivery time. Leadership shifts priorities toward cost reduction. The model keeps optimizing for speed while the business needs efficiency. The governance layer never registers the strategic misalignment.

- A consumer electronics brand runs AI-driven email personalization trained on broad audience data. The company repositions as a premium brand. The AI keeps targeting mass-market segments, running campaigns that actively undermine the brand identity the marketing team is trying to build.

In each case, the AI is technically functional. Governance audits would pass. But the systems are failing the business because governance isn’t connected to evolving business context.

This is the fundamental problem that AI contextual governance solves: not just “is the model accurate?” but “is it accurate for our current business reality?”

The Architecture of AI Contextual Governance

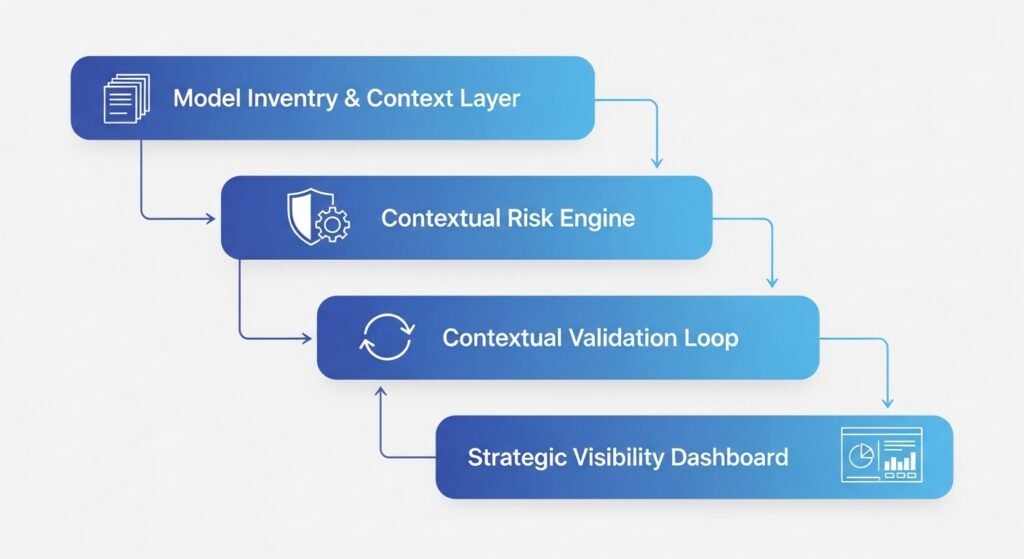

Effective contextual governance operates across four interconnected layers. Organizations that implement all four maintain alignment between AI behavior and business intent across the full deployment lifecycle.

1. The Model Inventory and Context Layer

Before you can govern AI contextually, you need to know what you have and what context each system operates in. This goes far beyond a spreadsheet of model names.

A complete AI inventory captures contextual metadata for every deployment: the base model used, any fine-tuning or proprietary training data applied, the business function it serves (HR, Finance, Legal, Engineering), the impact category (content generation, employment decision, critical infrastructure, biometric identification), data classification (public, internal, confidential, restricted, PII, PHI), and the current autonomous authority level of the system.

This inventory layer is the foundation. Without it, contextual governance is impossible—you can’t calibrate oversight to context you haven’t captured.
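As a rough illustration, one inventory entry could be modeled as a small record type. This is a minimal sketch: the field names, the `Authority` scale, and the two gap checks are assumptions made for this example, not a standard schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class DataClass(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"
    RESTRICTED = "restricted"
    PII = "pii"
    PHI = "phi"


class Authority(Enum):
    # Hypothetical autonomy scale; the article does not define one.
    ADVISORY = 1        # a human makes every decision
    HUMAN_APPROVAL = 2  # the system proposes, a human approves
    AUTONOMOUS = 3      # the system acts without review


@dataclass
class InventoryRecord:
    system_name: str
    base_model: str
    fine_tuning_data: list      # names of fine-tuning or proprietary datasets
    business_function: str      # e.g. "HR", "Finance", "Legal", "Engineering"
    impact_category: str        # e.g. "content generation", "employment decision"
    data_classes: set           # set of DataClass members
    authority: Authority
    owner: Optional[str] = None  # governance owner; None marks a gap

    def gaps(self) -> list:
        """Return the inventory gaps found for this record (illustrative checks)."""
        found = []
        if self.owner is None:
            found.append("no governance owner")
        sensitive = {DataClass.PII, DataClass.PHI, DataClass.RESTRICTED}
        if self.data_classes & sensitive and self.authority is Authority.AUTONOMOUS:
            found.append("sensitive data handled with full autonomy")
        return found


# Hypothetical deployment used only to exercise the record.
screener = InventoryRecord(
    system_name="resume-screener",
    base_model="example-llm-v1",
    fine_tuning_data=["historical-hiring-2019"],
    business_function="HR",
    impact_category="employment decision",
    data_classes={DataClass.PII},
    authority=Authority.AUTONOMOUS,
)
screener_gaps = screener.gaps()
```

Keeping the gap checks on the record itself means the inventory can be scanned for unowned or over-privileged systems before any risk tiering happens.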

2. The Contextual Risk Engine

Once the inventory exists, a contextual risk engine assigns risk tiers dynamically rather than statically. Low-risk systems—internal chatbots summarizing documents, content drafting tools—flow through fast-track approval. High-risk systems—credit scoring, hiring algorithms, health AI—follow a slow lane with algorithmic impact assessments, external audits, and legal sign-off before deployment changes go live.

The EU AI Act formalizes a version of this approach, explicitly categorizing AI by risk level and assigning regulatory requirements accordingly. But effective contextual governance goes further: it applies dynamic risk scoring based on real-time context signals, not just categorical labels assigned at deployment.

A customer recommendation engine operating normally might score as medium risk. The same engine during a major brand repositioning, when its outputs are actively misaligned with strategic intent, should trigger elevated oversight. Contextual risk engines catch that. Static compliance frameworks don’t.
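The recommendation-engine example can be sketched as a score that starts from a categorical baseline and rises with live context signals. The base scores, signal weights, and tier thresholds below are invented for illustration; a real risk engine would calibrate them against its own policy.

```python
from dataclasses import dataclass

# Hypothetical base scores (0-100) per impact category.
BASE_SCORE = {
    "document summarization": 10,
    "content drafting": 15,
    "customer recommendation": 40,
    "credit scoring": 80,
    "hiring": 80,
    "health": 85,
}


@dataclass
class ContextSignals:
    strategy_change_in_progress: bool = False  # e.g. a brand repositioning
    kpi_misalignment: float = 0.0              # 0.0 (aligned) to 1.0 (fully misaligned)
    new_jurisdiction: bool = False


def risk_score(impact_category: str, signals: ContextSignals) -> int:
    """Combine the static baseline with dynamic context adjustments."""
    score = BASE_SCORE[impact_category]
    if signals.strategy_change_in_progress:
        score += 15
    if signals.new_jurisdiction:
        score += 10
    score += round(20 * signals.kpi_misalignment)
    return min(score, 100)


def tier(score: int) -> str:
    """Map a numeric score to a risk tier (thresholds are assumptions)."""
    if score >= 70:
        return "high"
    if score >= 35:
        return "medium"
    return "low"


normal = risk_score("customer recommendation", ContextSignals())
repositioning = risk_score(
    "customer recommendation",
    ContextSignals(strategy_change_in_progress=True, kpi_misalignment=0.8),
)
print(tier(normal), tier(repositioning))
```

The same engine lands in a different tier depending on context, which is the behavior a label assigned once at deployment cannot express.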

3. The Contextual Validation Loop

This is where most governance frameworks stop short—and where contextual governance delivers its most distinctive value.

Contextual validation loops continuously compare AI outputs against business-specific definitions of success, not just technical accuracy metrics. They answer a different question than model monitoring: not “is the model performing as trained?” but “is the model delivering the business outcome we need?”

The distinction matters enormously. A churn prediction model might achieve 87% accuracy predicting who leaves—technically impressive—while failing to identify the high-value customers whose retention actually drives revenue. Standard model monitoring misses this. Contextual validation catches it because it’s measuring against business-defined outcomes, not just statistical performance.
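A toy calculation makes the gap concrete. The ten customers and revenue figures below are fabricated for illustration, and `revenue_recall` is a name chosen for this sketch, not an established metric.

```python
def accuracy(predicted, actual):
    """Fraction of customers whose churn outcome was predicted correctly."""
    hits = sum(p == a for p, a in zip(predicted, actual))
    return hits / len(actual)


def revenue_recall(predicted, actual, revenue):
    """Share of churning revenue that the model flagged in advance."""
    at_risk = sum(r for a, r in zip(actual, revenue) if a)
    caught = sum(r for p, a, r in zip(predicted, actual, revenue) if a and p)
    return caught / at_risk if at_risk else 1.0


# Ten hypothetical customers: True means "churned" / "predicted to churn".
actual = [True, True, True, False, False, False, False, False, False, False]
predicted = [True, True, False, False, False, False, False, False, False, False]
revenue = [100, 100, 5000, 200, 200, 200, 200, 200, 200, 200]

model_accuracy = accuracy(predicted, actual)
value_caught = revenue_recall(predicted, actual, revenue)
print(f"accuracy={model_accuracy:.0%}, churning revenue flagged={value_caught:.1%}")
```

Here the model misses only one customer, so accuracy looks strong, but that customer carries almost all of the revenue at risk. A validation loop that tracks the second number would raise the issue; one that tracks only the first would not.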

Effective validation loops require four components working together:

- Contextual definitions that translate business intent into measurable signals (customer lifetime value, not just transaction size; upgrade conversion, not just click rate)

- Real-time context monitoring that connects AI governance systems to business intelligence dashboards where strategic KPIs are tracked

- Automated drift detection that identifies when model behavior diverges from current business context—not just from its training baseline

- Escalation paths that route contextual anomalies to the right people: data scientists for technical issues, operational leaders for strategic misalignment, compliance teams for regulatory exposure
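The four components above might fit together roughly as follows. This is a sketch under stated assumptions: the KPI series, the 10% tolerance, the four-period window, and the team names in `ROUTES` are all hypothetical.

```python
from statistics import mean

# Hypothetical routing table mirroring the escalation paths listed above.
ROUTES = {
    "technical": "data science",
    "strategic": "operations leadership",
    "regulatory": "compliance",
}


def check_alignment(kpi_history, kpi_target, tolerance=0.10, window=4):
    """Flag contextual drift when the recent KPI average falls more than
    `tolerance` (as a fraction) below the current business target."""
    recent = mean(kpi_history[-window:])
    shortfall = (kpi_target - recent) / kpi_target
    return shortfall > tolerance, shortfall


def escalate(model_accuracy_ok, kpi_drifted, regulatory_flag):
    """Return the teams that should be alerted for the current findings."""
    recipients = []
    if not model_accuracy_ok:
        recipients.append(ROUTES["technical"])
    if kpi_drifted:
        recipients.append(ROUTES["strategic"])
    if regulatory_flag:
        recipients.append(ROUTES["regulatory"])
    return recipients


# Weekly upgrade-conversion rates pulled from a BI dashboard (made-up values).
conversion = [0.050, 0.049, 0.047, 0.044, 0.041, 0.040, 0.038]
drifted, shortfall = check_alignment(conversion, kpi_target=0.05)

# Technical accuracy still passes, so only the strategic route fires.
recipients = escalate(model_accuracy_ok=True, kpi_drifted=drifted, regulatory_flag=False)
print(drifted, round(shortfall, 3), recipients)
```

The drift check compares against the current business target rather than the training baseline, so updating `kpi_target` is enough to re-aim the loop when strategy changes.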

4. The Strategic Visibility Dashboard

Governance that isn’t visible to decision-makers isn’t governance—it’s documentation. The strategic layer of contextual governance translates model performance, risk profiles, and compliance status into business language accessible to executives and boards.

Rather than reporting on precision and recall scores, this layer surfaces business-relevant metrics: revenue impact of AI recommendations, customer satisfaction trends correlated with AI interactions, operational cost changes attributable to AI-driven decisions, and adaptation time when governance adjustments are made.

This visibility layer also serves a critical compliance function. As regulatory frameworks like the EU AI Act and GDPR require organizations to demonstrate not just that AI is governed, but that governance is effective and accountable, the strategic dashboard provides the auditable evidence trail that legal teams need.

How AI Contextual Governance Enables Business Evolution

The real competitive advantage of contextual governance isn’t risk reduction—it’s business agility. Organizations that govern AI contextually can adapt faster than those running static frameworks, because their governance infrastructure is designed to absorb change rather than resist it.

Adapting to Market Shifts Without Rebuilding Models

When market conditions change, organizations without contextual governance face a bad choice: live with misaligned AI or trigger expensive model retraining cycles. Contextual governance creates a third path—updating the contextual parameters and validation definitions that shape how models are applied, rather than rebuilding the models themselves.

A direct-to-consumer furniture company experiencing this challenge used contextual governance to address dynamic pricing misalignment without retraining their pricing AI. By updating the contextual validation logic to reflect new competitive data and margin targets, they restored strategic alignment within weeks rather than months.

Scaling Compliance Across Regulatory Environments

Global organizations face a compliance fragmentation problem: GDPR governs European data, HIPAA governs US healthcare data, the EU AI Act governs high-risk AI systems across the EU, and sector-specific regulations vary by jurisdiction. Managing this manually doesn’t scale.

Contextual governance automates regulatory alignment by encoding jurisdiction-specific rules into the policy orchestration layer. When an AI system processes data from a European user, GDPR controls activate automatically. When the same system processes US patient health data, HIPAA constraints layer on. The system doesn’t need separate governance tracks for each regulatory regime, because context triggers the appropriate controls.
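One way to picture context-triggered controls is a rule table evaluated per request. The sketch below is illustrative only: the control names are labels invented for this example rather than legal requirements, and scoping HIPAA to US health data is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestContext:
    user_region: str             # e.g. "EU", "US"
    data_types: frozenset        # e.g. frozenset({"health"})
    high_risk_use: bool = False  # whether the use case is treated as high-risk


# Each rule pairs a predicate over the context with the controls it activates.
RULES = [
    (lambda c: c.user_region == "EU",
     {"gdpr:lawful-basis-check", "gdpr:data-minimization"}),
    (lambda c: c.user_region == "US" and "health" in c.data_types,
     {"hipaa:access-logging", "hipaa:minimum-necessary"}),
    (lambda c: c.user_region == "EU" and c.high_risk_use,
     {"eu-ai-act:human-oversight", "eu-ai-act:technical-documentation"}),
]


def active_controls(ctx: RequestContext) -> set:
    """Union of every control set whose rule matches the request context."""
    controls = set()
    for predicate, rule_controls in RULES:
        if predicate(ctx):
            controls |= rule_controls
    return controls


eu_controls = active_controls(RequestContext("EU", frozenset({"profile"})))
us_health_controls = active_controls(RequestContext("US", frozenset({"health"})))
eu_high_risk_controls = active_controls(
    RequestContext("EU", frozenset({"profile"}), high_risk_use=True)
)
print(sorted(eu_high_risk_controls))
```

Because controls are unioned rather than selected from separate tracks, entering a new jurisdiction amounts to adding a rule to the table.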

Supporting Organizational Transformation

AI democratizes expertise in ways that fundamentally reshape organizational structure. Traditional hierarchies flatten as real-time AI analytics distribute insights that once required senior-level interpretation. New roles emerge—AI trainers, data ethicists, governance coordinators—whose function is explicitly to bridge technical AI capabilities and human organizational judgment.

The Human-AI Governance (HAIG) framework addresses this structural dimension explicitly, designing governance to flex as AI assumes greater decision authority in some domains while human oversight intensifies in others. The goal isn’t to fix the ratio of human to AI decision-making—it’s to ensure that ratio is calibrated appropriately to each context and adjusts as both AI capabilities and business needs evolve.

Real-World Applications: What Industry Leaders Are Actually Doing

The organizations extracting the most business value from AI aren’t the ones with the most sophisticated models—they’re the ones with the governance infrastructure that lets those models stay aligned with evolving business reality.

Walmart has deployed AI across its logistics operations with governance frameworks that connect routing optimization to real-time cost and service level targets. When strategic priorities shift, governance parameters update to reflect new optimization objectives rather than requiring model replacement.

BMW operates AI-powered quality control on assembly lines with tiered oversight calibrated to defect impact. Cosmetic issues trigger automated documentation; structural defects trigger human review and production holds. The governance layer, not the AI alone, determines response protocols.

JPMorgan Chase’s contract intelligence system processes legal documents with governance controls that account for document type, regulatory jurisdiction, and decision impact. A routine vendor contract flows through automated processing; a regulatory agreement triggers additional legal review regardless of how the AI scores it.

Unilever demonstrated the value of contextual governance during COVID supply chain disruptions, when its predictive supply forecasting AI, trained on pre-pandemic patterns, required rapid contextual recalibration. Organizations with mature contextual validation loops made that adjustment in weeks; those without them struggled for months.

The Governance Challenges You Need to Solve for (Not Around)

Contextual governance isn’t a magic solution to AI’s hard problems. It’s a framework for managing them rigorously. The challenges don’t disappear—they become manageable.

Algorithmic Bias and Demographic Context

Bias in AI systems isn’t always detectable through aggregate accuracy metrics. A hiring algorithm might perform accurately on average while systematically underscoring candidates from specific demographic groups. Contextual governance addresses this by building demographic context into validation logic—not to create different accuracy standards, but to ensure that accuracy is measured across subgroups, not just overall populations.
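Measuring accuracy per subgroup is simple to sketch. The records below are synthetic, the group labels are placeholders, and the 10-point gap threshold is an assumption; an accuracy gap is also only one of several fairness measures a real assessment would consider.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, predicted, actual) tuples.
    Returns overall accuracy and a dict of per-group accuracy."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        hits[group] += int(predicted == actual)
    overall = sum(hits.values()) / sum(totals.values())
    per_group = {g: hits[g] / totals[g] for g in totals}
    return overall, per_group


def flag_gaps(overall, per_group, max_gap=0.10):
    """Groups whose accuracy trails the overall figure by more than max_gap."""
    return sorted(g for g, acc in per_group.items() if overall - acc > max_gap)


# Synthetic outcomes: group A is 80 records (76 correct), group B is 20 (12 correct).
records = (
    [("A", 1, 1)] * 76 + [("A", 1, 0)] * 4
    + [("B", 1, 1)] * 12 + [("B", 0, 1)] * 8
)
overall, per_group = accuracy_by_group(records)
flagged = flag_gaps(overall, per_group)
print(overall, per_group, flagged)
```

The aggregate figure looks healthy because the larger group dominates it; only the per-group breakdown shows that the smaller group is served much worse.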

The NIST AI Risk Management Framework provides guidance on bias evaluation that organizations can operationalize through contextual governance controls, requiring bias assessment as a standard component of risk tiering for employment and credit AI systems.

Data Privacy and Regulatory Alignment

GDPR and the EU AI Act create layered compliance obligations that interact in complex ways for AI systems. An AI system that complies with GDPR data minimization requirements might still be classified as high-risk under the EU AI Act because of its intended use, triggering additional transparency and documentation obligations.

Contextual governance manages this complexity by maintaining a complete picture of each system’s regulatory exposure—not just which regulations apply, but how they interact and where conflicts require escalation.

Organizational Inertia and Governance Bureaucracy

The biggest practical obstacle to contextual governance isn’t technical—it’s organizational. Governance functions tend toward formalization: quarterly reviews, approval gates, sign-off requirements. These structures create lag between business context changes and governance response.

Effective contextual governance automates routine monitoring and validation while preserving human judgment for genuinely complex decisions. Automated systems handle drift detection, compliance scoring, and performance tracking. Humans handle policy decisions, exception management, and strategic interpretation. The goal is to remove bureaucratic friction from the governance processes that don’t require it, freeing governance capacity for the decisions that genuinely do.

Building Your AI Contextual Governance Infrastructure

Implementing contextual governance isn’t a single project—it’s a phased infrastructure build. Organizations that try to implement everything simultaneously typically create complexity without coherence. A staged approach delivers measurable value at each phase while building toward comprehensive contextual governance capability.

Phase 1: Inventory and Classification (Weeks 1-8)

Map your existing AI deployments systematically. For each system, capture: base model, training data sources, business function, data classification, current decision authority, and regulatory exposure. Use this inventory to identify gaps—AI systems operating without clear governance ownership, systems processing sensitive data without appropriate controls, deployments that predate current regulatory requirements.

This phase typically surfaces more AI activity than organizations expect. Shadow AI—unofficial deployments that haven’t gone through formal approval—is common and represents concentrated governance risk.

Phase 2: Risk Tiering and Contextual Rules (Weeks 4-16)

Using the inventory as a foundation, assign risk tiers to each AI deployment based on contextual factors: data sensitivity, decision impact, regulatory exposure, and human oversight requirements. For each tier, define the governance controls that apply: approval workflows, monitoring frequency, bias assessment requirements, audit documentation standards.

Build the contextual intake process that routes new AI deployments through appropriate governance paths based on their contextual profile. High-risk systems follow the slow lane with rigorous review; minimal-risk systems follow fast-track processes that don’t create unnecessary friction.
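The intake routing described in this phase could be expressed as a small table of controls per tier plus a routing function. The tier names, review intervals, document lists, and routing rules here are placeholders chosen for the sketch; each organization would define its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierControls:
    approval_path: str
    monitoring_interval_days: int
    bias_assessment_required: bool
    audit_docs: tuple


# Hypothetical control sets per tier.
CONTROLS = {
    "minimal": TierControls("fast-track", 90, False, ("model card",)),
    "limited": TierControls("standard review", 30, False,
                            ("model card", "data sheet")),
    "high": TierControls("slow lane", 7, True,
                         ("model card", "data sheet",
                          "algorithmic impact assessment", "legal sign-off")),
}

HIGH_IMPACT = {"employment", "credit", "health"}
SENSITIVE = {"confidential", "restricted", "pii", "phi"}


def intake(profile: dict) -> TierControls:
    """Route a new deployment to a governance path from its contextual profile."""
    if profile["decision_impact"] in HIGH_IMPACT or profile["regulatory_exposure"]:
        tier = "high"
    elif profile["data_sensitivity"] in SENSITIVE:
        tier = "limited"
    else:
        tier = "minimal"
    return CONTROLS[tier]


credit_model = intake({"decision_impact": "credit",
                       "data_sensitivity": "pii",
                       "regulatory_exposure": True})
doc_summarizer = intake({"decision_impact": "internal productivity",
                         "data_sensitivity": "internal",
                         "regulatory_exposure": False})
print(credit_model.approval_path, doc_summarizer.approval_path)
```

Keeping the controls in data rather than in code paths lets the governance team adjust a tier's requirements without touching the routing logic.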

Phase 3: Continuous Monitoring and Validation (Weeks 12-24)

Implement real-time monitoring that connects model performance to business outcomes. This requires integration between AI monitoring platforms and business intelligence systems—a technical integration that most organizations haven’t built.

Tools like PromptLayer provide the observability infrastructure needed to track AI behavior in production: usage patterns, output distributions, drift indicators, and compliance signals. These operational feeds become the data source for contextual validation logic.

Phase 4: Strategic Visibility and Adaptive Learning (Ongoing)

Build the strategic dashboard layer that translates governance data into executive visibility. Define business-specific metrics that reflect AI’s actual impact: revenue attributable to AI recommendations, operational costs affected by AI-driven decisions, compliance risk exposure across the AI portfolio.

Implement the feedback loops that turn governance insights into model and policy refinements. This is the phase where governance transitions from reactive to predictive—where the system identifies contextual drift before it causes business impact rather than after.

The Organizations That Win Are the Ones That Govern Continuously

The McKinsey Global Institute projects that AI could add about $13 trillion to global economic output by 2030, but the organizations capturing that value won’t necessarily be the ones with the most advanced models. They’ll be the ones whose governance infrastructure allows AI to remain aligned with evolving business reality across years of deployment, not just in the first months after launch.

AI contextual governance is that infrastructure. It’s what separates organizations that scale AI responsibly from those that accumulate misaligned, ungoverned deployments that create regulatory exposure, business risk, and eroded trust.

The competitive window for building this capability is open now. Early movers are establishing governance infrastructure that becomes a durable operational advantage. Those that wait are building technical debt that becomes harder to address as AI deployments multiply and regulatory requirements intensify.

Start with your AI inventory. Build your risk tiers. Connect your governance to your business outcomes. And treat contextual governance not as a compliance obligation but as the strategic operating system that makes AI evolution possible.

FAQs: AI Contextual Governance, Business Evolution, and Adaptation

What is AI contextual governance?

AI contextual governance is a dynamic oversight framework that calibrates governance controls to each AI system’s specific use case, risk profile, data sensitivity, and business context—rather than applying uniform policies across all AI deployments. Unlike static compliance frameworks, it adapts as business conditions, regulations, and AI capabilities evolve, maintaining alignment between AI behavior and organizational intent continuously rather than through periodic audits.

How does contextual governance differ from traditional AI governance?

Traditional AI governance applies consistent rules across all systems and validates compliance through periodic review cycles. Contextual governance applies risk-appropriate controls dynamically, validating not just technical compliance but business alignment in real time. The fundamental difference is that contextual governance treats business context as a live variable that governance policies must respond to, not a fixed baseline established at deployment.

What are the business benefits of AI contextual governance?

Contextual governance is designed to deliver four benefits: faster adaptation to market changes (governance parameters update without full model retraining), stronger regulatory compliance (automated controls respond to regulatory requirements by jurisdiction and data type), reduced governance overhead (automated monitoring handles routine validation, freeing human capacity for strategic decisions), and better AI ROI (models stay aligned with current business objectives rather than drifting toward outdated targets).

How do you start implementing AI contextual governance?

Begin with a comprehensive AI inventory that captures contextual metadata for every deployment. Then establish risk tiers and the contextual rules that determine governance controls for each tier. Build continuous monitoring that connects model performance to business outcomes—not just technical accuracy metrics. Finally, create the strategic visibility layer that makes governance status accessible to executives and boards. Most organizations see meaningful governance improvement within 6 months of starting this process.

What are the biggest challenges in AI contextual governance?

The three most significant challenges are organizational inertia (governance processes tend toward formalization that can’t keep pace with business change), integration complexity (connecting AI monitoring systems to business intelligence platforms requires technical work most organizations haven’t prioritized), and contextual definition difficulty (translating business intent into measurable validation signals requires ongoing collaboration between business leaders and technical teams). Each is solvable, but each requires deliberate attention.

How does contextual governance address AI bias and fairness?

Contextual governance incorporates demographic context into validation logic, requiring that accuracy and fairness be assessed across population subgroups rather than only in aggregate. Risk tiering mandates bias assessment for high-risk systems—those affecting employment, credit, health, and law enforcement decisions—before deployment and on an ongoing basis. This doesn’t eliminate bias but creates systematic processes for detecting and addressing it as business context and model behavior evolve.

How does AI contextual governance scale with organizational growth?

Contextual governance is inherently modular—new AI deployments enter the governance framework through the contextual intake process and receive risk-appropriate controls automatically. As organizations expand into new markets or regulatory jurisdictions, governance parameters update to reflect new contextual requirements without requiring governance infrastructure to be rebuilt. The policy orchestration layer handles complexity through programmable, modular templates rather than manual case-by-case management.
