Every millisecond counts. When a user hits your site after a deployment, a cache flush, or a CDN propagation event, the difference between a warm cache and a cold cache can mean hundreds of milliseconds of added latency, enough to spike your bounce rate, tank your Core Web Vitals, and send conversion rates into a nosedive. Yet most engineering teams treat cache warmup as an afterthought, assuming traffic will eventually prime things on its own.

That assumption is costing them. Studies consistently show that a one-second delay in page response can reduce conversions by up to 7%, and Google’s own ranking signals now explicitly reward fast-loading experiences through Core Web Vitals. When your cache is cold, every incoming user becomes an unwitting test subject, absorbing the full latency of an origin fetch while your infrastructure scrambles to populate edge nodes.

Warmup cache requests fix this by flipping the equation. Instead of waiting for real users to prime your cache, you proactively drive controlled HTTP requests across your content delivery infrastructure before traffic arrives. The result: your edge nodes are pre-populated, your TTFB is minimized from the very first real request, and your users never experience the degraded performance of a cold cache state.

What Are Warmup Cache Requests and Why Do They Matter?

A warmup cache request is an intentional, controlled HTTP request sent to a server or CDN edge node with the explicit purpose of populating the cache before real user traffic arrives. Rather than letting the first wave of users absorb the cost of cache misses, where every request must travel all the way to the origin server, a warmup process pre-seeds the cache with the responses your system will most commonly serve.

Think of it like preheating an oven before you put food in. You wouldn’t bake at a cold temperature and hope it works out; you preheat so the environment is ready the moment it’s needed. Cache warmup applies the same logic to web infrastructure.

Warmup cache requests matter for several interconnected reasons:

Eliminating Cold Cache Penalties

Without warmup, the first batch of users after any cache-clearing event (deployment, TTL expiry, purge, or failover) triggers a thundering herd of origin fetches. This floods your database and backend services at exactly the moment load is highest.

Protecting Core Web Vitals

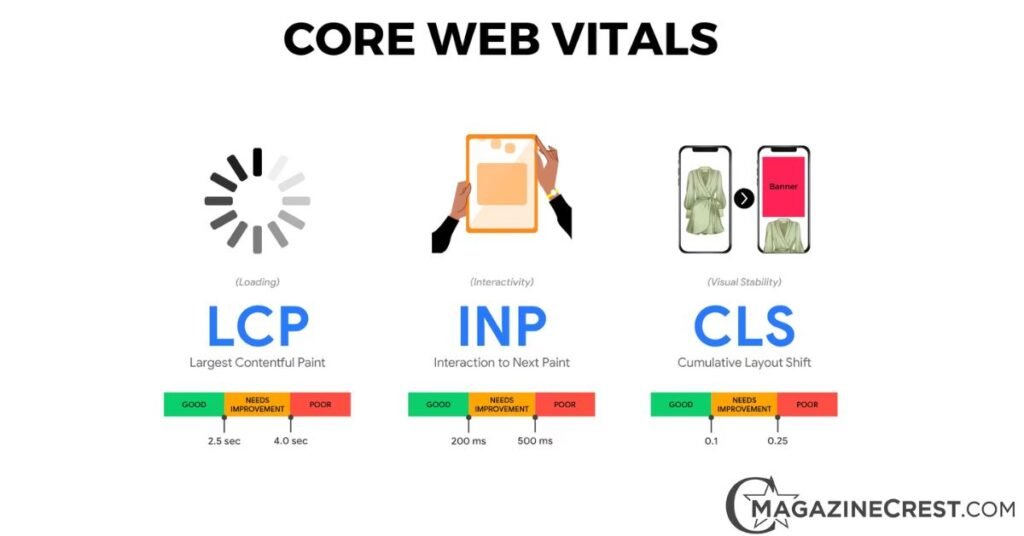

Metrics like Largest Contentful Paint (LCP) and Time to First Byte (TTFB) are directly impacted by whether content is served from cache or origin. A warm cache can cut TTFB from 300–800ms to under 50ms on well-optimized CDN infrastructure.

Supporting Predictable Performance Under Load

Performance-sensitive workloads (e-commerce flash sales, media launches, SaaS application deployments) require consistent response times. Cache warmup makes performance predictable.

Reducing Infrastructure Costs

Every cache miss is a paid origin fetch. At scale, warming your cache proactively can meaningfully reduce compute and bandwidth costs.

Studies across CDN performance benchmarks consistently show that proactive warmup strategies can achieve cache hit ratios above 90%, compared to ratios as low as 40–60% in the minutes immediately following a cold start event. The business case is clear.

How Warmup Cache Requests Work

At its core, a warmup cache request works by mimicking the HTTP request pattern that real users would generate, but doing so in advance, under controlled conditions, targeting specific URLs across your content delivery infrastructure.

The mechanics vary depending on whether you’re warming a CDN layer, a reverse proxy like Nginx or Varnish Cache, an in-memory store like Redis, or an application-level cache.

The Role Of CDN And Edge Locations In Cache Warmup

Modern content delivery networks operate across hundreds of edge locations worldwide, distributed via anycast routing so that users are automatically directed to the nearest point of presence.

The challenge with CDN-level caching is that each edge node maintains its own independent cache store. A cache miss at one edge location does not benefit from a populated cache at another: each node must independently fetch from origin and store the response.

This architecture means that a naive warmup that only targets a single endpoint will leave most of your edge network cold. Effective CDN-level warmup must account for geographic distribution, warming edge nodes in the regions where your traffic is concentrated.

Platforms like Cloudflare, Akamai, and Fastly provide APIs and tooling specifically for this purpose, allowing you to trigger cache population across multiple edge locations simultaneously.

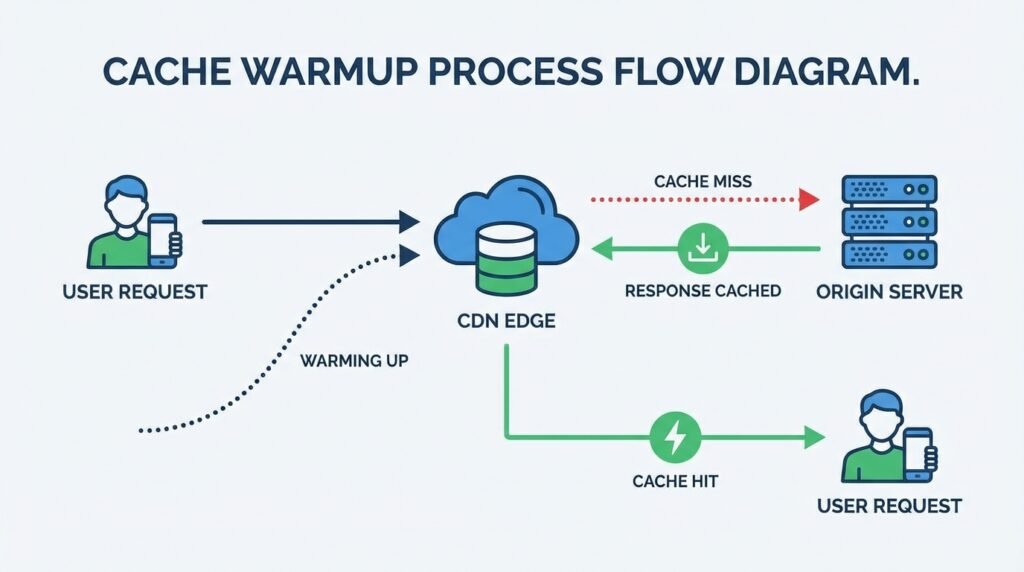

The warmup request flows like this: a script sends an HTTP GET to a target URL → the request reaches the nearest edge node → the edge node has no cached response (cache miss) → the request is forwarded to the origin server → the origin returns the response with appropriate cache-control headers → the edge node stores the response according to TTL → subsequent requests to that URL from real users are served directly from the edge (cache hit), with no origin round trip.
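The flow above can be sketched with a toy, in-memory stand-in for a single edge node. `ToyEdgeCache` and `fake_origin` are hypothetical names invented for illustration; a real CDN also honors Cache-Control directives, cache keys, and eviction, none of which are modeled here.

```python
import time
from typing import Callable, Dict, List, Tuple


class ToyEdgeCache:
    """In-memory stand-in for one edge node (illustrative only)."""

    def __init__(
        self,
        fetch_origin: Callable[[str], str],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch_origin = fetch_origin
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[str, Tuple[float, str]] = {}  # url -> (expires_at, body)

    def get(self, url: str) -> Tuple[str, str]:
        """Return (cache_status, body); cache_status is "HIT" or "MISS"."""
        now = self._clock()
        entry = self._store.get(url)
        if entry is not None and entry[0] > now:
            return "HIT", entry[1]
        body = self._fetch_origin(url)  # cache miss: forward to origin
        self._store[url] = (now + self._ttl, body)
        return "MISS", body


origin_calls: List[str] = []


def fake_origin(url: str) -> str:
    """Pretend origin server that records every fetch it receives."""
    origin_calls.append(url)
    return f"<html>{url}</html>"


edge = ToyEdgeCache(fake_origin, ttl_seconds=3600)
warmup_status, _ = edge.get("https://example.com/")  # the warmup request
user_status, _ = edge.get("https://example.com/")    # the first real user
print(warmup_status, user_status, len(origin_calls))  # prints: MISS HIT 1
```

The warmup request pays for the origin fetch, so the first real user does not.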

How Warmup Requests Populate Edge Cache

For a warmup request to successfully populate an edge cache, several conditions must be met:

- Correct cache-control headers: The origin response must include headers like Cache-Control: public, max-age=3600 or equivalent. Responses with no-store or private directives will not be cached regardless of warmup intent.

- Accurate URL selection: The request must target the exact canonical URL that real traffic will use, including query strings, path parameters, and protocol (HTTP vs HTTPS). A warmup to http://example.com/page does not warm https://example.com/page.

- Correct request headers: Some CDN configurations vary cache keys based on request headers (Accept-Encoding, Accept-Language, User-Agent). Warmup requests must replicate the header profiles of real user traffic to populate the correct cache variants.

- Sufficient request volume per node: Some CDN configurations require multiple requests before an object is considered “hot” and eligible for caching, a threshold designed to prevent caching of rarely-accessed resources. Your warmup script may need to send 2–3 requests per URL per target edge location.
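One way to account for header variants and per-URL request thresholds is to expand the URL list into an explicit request plan before dispatching anything. The sketch below is illustrative: `build_warmup_plan`, the two header profiles, and the repeat count are assumptions, and the right values depend on how your CDN builds its cache keys.

```python
from itertools import product
from typing import Dict, Iterable, Iterator, Tuple

HeaderProfile = Dict[str, str]


def build_warmup_plan(
    urls: Iterable[str],
    header_profiles: Iterable[HeaderProfile],
    repeats: int = 1,
) -> Iterator[Tuple[str, HeaderProfile]]:
    """Yield one (url, headers) pair per URL, per header profile, per repeat."""
    for url, profile in product(urls, header_profiles):
        for _ in range(repeats):
            yield url, dict(profile)  # copy so callers can modify headers safely


# Assumed profiles: one per compression variant the CDN keys on.
profiles = [
    {"Accept-Encoding": "gzip"},
    {"Accept-Encoding": "br"},
]
plan = list(
    build_warmup_plan(
        ["https://example.com/", "https://example.com/pricing"],
        profiles,
        repeats=2,
    )
)
print(len(plan))  # 2 URLs x 2 profiles x 2 repeats = 8
```

Each pair in the plan can then be handed to whatever HTTP client performs the actual warmup.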

Manual Vs Automated Cache Warmup

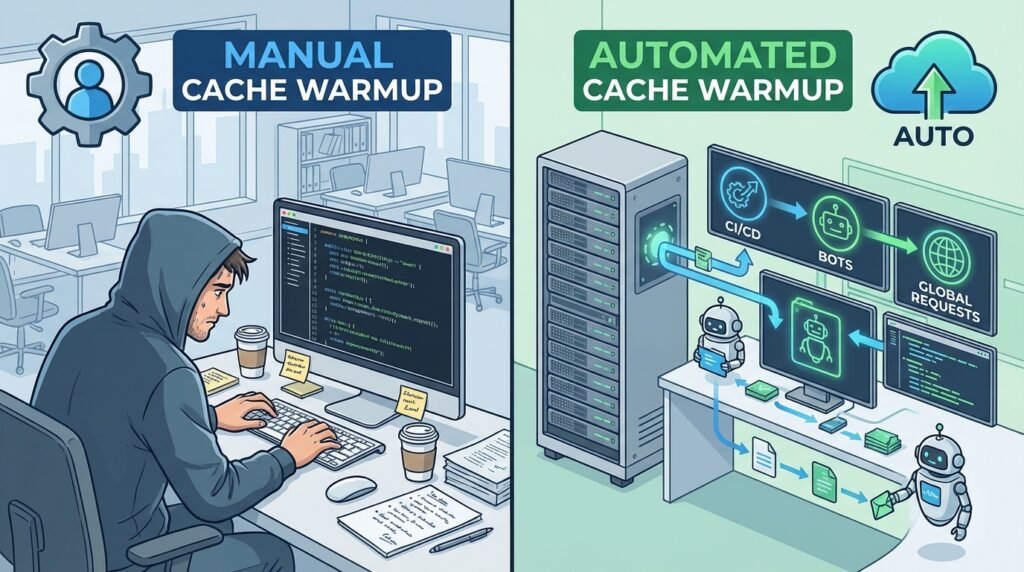

Manual warmup involves an engineer running a script or a series of curl commands against a target URL list following a deployment or cache invalidation event.

It works for small-scale applications with predictable content, but does not scale to large URL spaces, multi-region infrastructure, or continuous deployment workflows.

Automated warmup ties cache priming into CI/CD pipelines, deployment hooks, or scheduled jobs. When a deployment completes or a TTL expiry event fires, an automated warmup system takes the URL inventory (often derived from a sitemap, server access logs, or an analytics export) and dispatches concurrent HTTP requests across target edge locations.

Tools like OneUptime, custom Python scripts using ThreadPoolExecutor, and CDN-native warming APIs all support this approach.

Automated warmup systems are the standard for production-grade infrastructure handling performance-sensitive workloads. The investment in setup pays dividends in every subsequent deployment.

Why Cold Cache Is A Serious Performance Problem

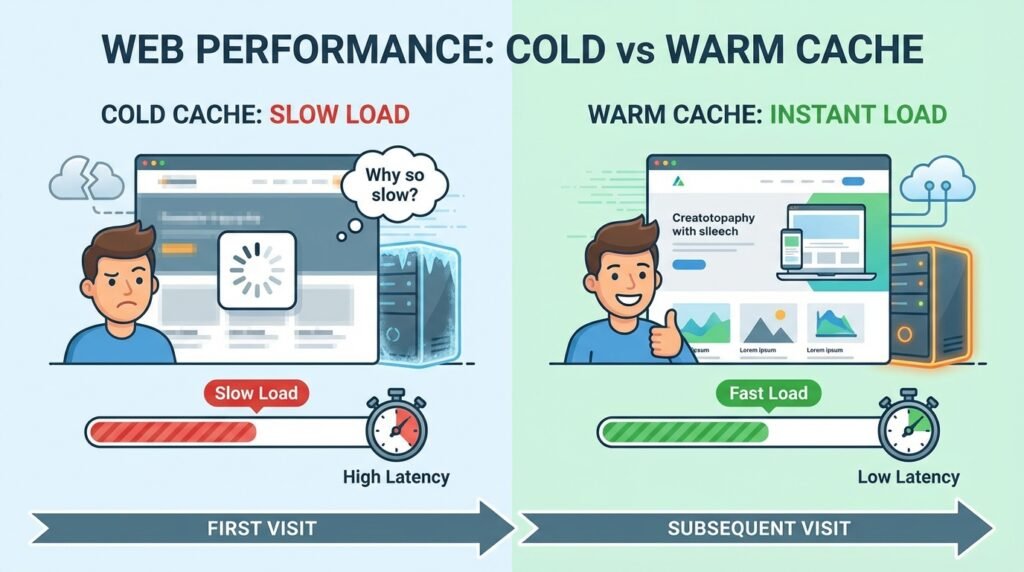

A cold cache is a cache store that has been recently cleared, expired, or never populated, meaning that incoming requests cannot be served from cache and must instead be routed to the origin server.

Cold cache states are not edge cases; they happen constantly in production environments:

- After every deployment that triggers a cache purge

- When TTL values expire across an edge network simultaneously

- After a CDN failover or configuration change

- During initial launch or regional expansion

- Following a DDoS mitigation event that flushes cache stores

The impact of a cold cache compounds with traffic volume. During normal traffic, a few cache misses per second impose manageable load on origin.

But when a cold cache coincides with a traffic spike (a product launch, a viral post, a scheduled event), the thundering herd effect can be catastrophic.

Every user arriving in the same window generates an origin fetch, overwhelming backend services that were sized for cache-hit traffic patterns.
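A rough way to see why the effect compounds: origin load is approximately the total request rate multiplied by the miss ratio. The traffic figure and hit ratios below are hypothetical, chosen only to illustrate the arithmetic.

```python
def origin_rps(total_rps: float, hit_ratio: float) -> float:
    """Requests per second that fall through to origin at a given hit ratio."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return total_rps * (1.0 - hit_ratio)


warm = origin_rps(2000, 0.95)  # steady state: about 100 origin requests/s
cold = origin_rps(2000, 0.40)  # shortly after a purge: about 1,200 origin requests/s
print(f"warm={warm:.0f} rps, cold={cold:.0f} rps, multiplier={cold / warm:.0f}x")
```

With these assumed numbers, the same user traffic puts roughly twelve times more load on a backend that was sized for the warm case.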

Netflix’s engineering team has publicly documented cache warming as a core infrastructure discipline in their distributed CDN architecture, where the difference between warm and cold edge delivery states represents meaningful degradation in subscriber experience at scale.

For most web applications, the impact is less dramatic but still significant: a cold cache window of 5–10 minutes following each deployment means your most engaged users, those who arrive immediately after launch, consistently receive the worst performance your infrastructure can deliver.

Cold Cache vs Warm Cache: Briefly

The performance difference between a cold and warm cache is not subtle: it affects every layer of your stack simultaneously, from the user’s perceived load time to your infrastructure’s cost per request.

The table below captures what actually changes when warmup cache requests do their job.

| Attribute | Cold Cache | Warm Cache |
| --- | --- | --- |
| TTFB | 300–1,500ms (full origin fetch) | Under 50ms (edge delivery) |
| Page Load | Blank screen, cascading delays | Instant first paint |
| Core Web Vitals | LCP, TTFB, INP all degrade | Consistently within Google’s “good” thresholds |
| Backend Load | 5–20x spike after deployment | Origin shielded, minimal load |
| Performance Variance | Non-deterministic, unstable | Predictable across sessions |
| User Behavior | Higher bounce, lower conversions | Lower bounce, stronger engagement |
| SEO Signal | Negative: crawlers hit cold responses | Positive: fast, stable crawl experience |
| Infrastructure Cost | Higher compute and DB spend | Reduced: fewer origin fetches |

Research by Google and Deloitte (Think with Google) found that a 0.1-second improvement in mobile load time increases conversions by up to 8% for retail sites.

The reverse holds equally true: cold cache windows immediately following deployments are precisely when your highest-intent users, those who arrive right after a launch announcement, absorb your worst possible performance.

Key Benefits of Warmup Cache Requests

The case for warmup cache requests extends across performance, reliability, cost, and user experience dimensions:

Eliminated Cold Start Latency

By pre-populating cache before any real user arrives, warmup requests eliminate the latency penalty that would otherwise be absorbed by the first wave of traffic.

Higher Cache Hit Ratios From The Start

Rather than waiting for organic traffic to gradually warm the cache (a process that can take minutes to hours for large URL spaces), warmup scripts achieve high cache hit ratios immediately after deployment.

Protected SEO Performance

Search engine crawlers encounter your application at unpredictable times, often during or immediately after a deployment.

A warm cache ensures Googlebot receives fast, correctly-rendered responses that support accurate indexing and positive Core Web Vitals signals.

Reduced Origin Infrastructure Pressure

With edge delivery eliminating origin fetches before real user load arrives, your backend services operate within their design parameters rather than absorbing cold-start spikes.

Consistent Performance Testing

Load tests and performance benchmarks run against a warm cache produce results that reflect steady-state production behavior, rather than cold cache artifacts.

Improved Developer Confidence

Deployment pipelines that include automated warmup steps give engineering teams confidence that each release ships with performance parity to the previous version.

Cache Types That Benefit Most from Warmup

Not all cache layers benefit equally from warmup. Understanding which cache types to prioritize is key to efficient warmup strategy.

HTML and Static Page Cache

HTML responses are typically the highest-priority warmup target. They are the entry point for all subsequent resource loading, they directly determine TTFB and LCP, and they are the assets most likely to be served to users immediately following a deployment.

Warming your top 100–1,000 URLs derived from analytics should be the first step in any warmup implementation.

Image and Media Cache

Images frequently constitute 60–70% of a page’s total weight and are the primary driver of LCP on content-heavy pages. Image variant pre-caching (warming multiple responsive image sizes per asset) ensures that users on all device types receive cached image responses.

CDN platforms that perform on-the-fly image resizing benefit from warmup that targets the specific dimension/format combinations your responsive web design breakpoints generate.

Dynamic and API Content

API responses that are cacheable (read-only data that changes infrequently, properly configured with public cache-control headers) are strong warmup candidates.

Product listings, category pages, navigation trees, and configuration endpoints are common examples.

Cache-aside patterns for Redis or Aerospike, where the application checks cache before querying the database, benefit significantly from warmup that pre-seeds the key-value store before traffic arrives.
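A minimal cache-aside sketch with a warmup step might look like the following. A plain dictionary stands in for Redis or Aerospike, and `CacheAside`, `warm`, and `load_product` are hypothetical names used only for illustration.

```python
from typing import Callable, Dict, Iterable, List


class CacheAside:
    """Check the cache first; fall back to the database loader on a miss."""

    def __init__(self, load_from_db: Callable[[str], str]) -> None:
        self._load = load_from_db
        self._cache: Dict[str, str] = {}  # stand-in for Redis or Aerospike

    def get(self, key: str) -> str:
        if key in self._cache:
            return self._cache[key]   # cache hit
        value = self._load(key)       # cache miss: query the database
        self._cache[key] = value
        return value

    def warm(self, keys: Iterable[str]) -> int:
        """Pre-seed the cache; return how many keys were newly loaded."""
        loaded = 0
        for key in keys:
            if key not in self._cache:
                self._cache[key] = self._load(key)
                loaded += 1
        return loaded


db_calls: List[str] = []


def load_product(key: str) -> str:
    """Pretend database lookup that records each call."""
    db_calls.append(key)
    return f"row for {key}"


store = CacheAside(load_product)
warmed = store.warm(["product:1", "product:2"])  # runs before traffic arrives
first_read = store.get("product:1")               # a user request: served from cache
print(warmed, len(db_calls))  # prints: 2 2
```

The database is queried during the warmup pass rather than on the first user request.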

Edge-Level Distributed Cache

Distributed edge caches across CDN edge locations present the most complex warmup challenge because each node must be independently populated.

Tools and APIs from Cloudflare, Akamai, and Fastly support geo-aware warmup targeting specific PoPs based on expected traffic origin distribution.

Multi-cloud load balancing environments require warmup scripts that account for the routing rules of each CDN provider in the stack.

Common Methods for Warmup Cache Requests

Script-Based Warmup

The most direct approach to cache warmup is a script that reads a URL list and dispatches concurrent HTTP GET requests. In Python, the ThreadPoolExecutor pattern allows high-concurrency warmup across large URL spaces:

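```python
import concurrent.futures

import requests

# One URL per line; blank lines are skipped.
with open("urls.txt") as f:
    urls = [line.strip() for line in f if line.strip()]


def warm_url(url):
    """Fetch one URL and return (url, status, elapsed seconds or None)."""
    try:
        r = requests.get(url, timeout=10, headers={"User-Agent": "CacheWarmer/1.0"})
        return (url, r.status_code, r.elapsed.total_seconds())
    except requests.RequestException as e:
        # No timing is available for a failed request.
        return (url, f"error: {e}", None)


# Cap concurrency so the warmup pass itself does not overload the origin.
with concurrent.futures.ThreadPoolExecutor(max_workers=20) as executor:
    results = list(executor.map(warm_url, urls))

for url, status, elapsed in results:
    timing = f"{elapsed:.3f}s" if elapsed is not None else "n/a"
    print(f"{status} | {timing} | {url}")
```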

This concurrent warmup pattern is the foundation of most production implementations. Key parameters (worker concurrency, request timeout, and per-domain rate limits) should be tuned to your infrastructure’s capacity envelope to avoid overloading the origin during the warmup pass.

For Redis-based application caches, a warmup script using pipeline and batch operations (pipeline(), setex, hash tags) can pre-seed key-value pairs before the application receives traffic.

Reading from a snapshot of the previous cache state or generating data from the application’s own seeding logic is the cleanest approach for stateful in-memory caches.

Traffic Simulation

Headless browsers (Playwright, Puppeteer) can simulate realistic user traffic patterns, triggering not just HTML fetches but the full resource waterfall (CSS, JS, fonts, images) that a real browser load generates.

This approach warms cache at every layer simultaneously and is particularly effective for single-page applications where JS bundles must be loaded to trigger API calls.

Traffic simulation is more resource-intensive than simple curl-based warmup but produces a more complete cache population, particularly for dynamically composed pages where the full resource inventory is only known at render time.

Log-Driven Intelligent Warmup

Log-driven warmup uses server access logs, CDN request logs, or analytics data to identify the specific URLs, query parameters, and request header profiles that real traffic generates.

Rather than warming a static sitemap, log-driven warmup targets the actual URL space your users traverse, including paginated results, search query responses, and user-specific (but cacheable) content.

Log-driven warmup extends naturally into predictive scheduling: by applying time-series analysis to access logs, you can identify which URLs spike at specific times (morning news peaks, lunchtime shopping traffic, event-driven surges) and schedule warmup to complete before those windows open.

Tying warmup execution to both the deployment pipeline and the calendar gives you coverage against both planned and time-predictable traffic events.
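As a rough sketch of the log-driven approach, the function below counts successful GET requests per path and returns the busiest ones. It assumes access logs in Common or Combined Log Format; `top_paths` and the sample lines are hypothetical, and a real pipeline would also need to handle its own log format, bots, and query-string normalization.

```python
import re
from collections import Counter
from typing import Iterable, List, Tuple

# Matches the quoted request line and the status code that follows it.
REQUEST_RE = re.compile(r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3})\b')


def top_paths(log_lines: Iterable[str], limit: int = 100) -> List[Tuple[str, int]]:
    """Return the most-requested paths among successful GET requests."""
    counts: Counter = Counter()
    for line in log_lines:
        match = REQUEST_RE.search(line)
        if match and match["method"] == "GET" and match["status"] == "200":
            counts[match["path"]] += 1
    return counts.most_common(limit)


sample = [
    '203.0.113.5 - - [10/Oct/2024:13:55:36 +0000] "GET /products?page=2 HTTP/1.1" 200 2326',
    '203.0.113.9 - - [10/Oct/2024:13:55:37 +0000] "GET /products?page=2 HTTP/1.1" 200 2326',
    '203.0.113.7 - - [10/Oct/2024:13:55:38 +0000] "GET / HTTP/1.1" 200 512',
    '203.0.113.7 - - [10/Oct/2024:13:55:39 +0000] "POST /cart HTTP/1.1" 200 64',
    '203.0.113.8 - - [10/Oct/2024:13:55:40 +0000] "GET /missing HTTP/1.1" 404 0',
]
print(top_paths(sample, limit=2))  # [('/products?page=2', 2), ('/', 1)]
```

Because the result is already sorted by request count, it doubles as a priority order for the warmup pass.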

Best Practices for Effective Warmup Cache Requests

Build URL Inventories From Real Data

Use analytics exports, sitemaps, and access logs to construct the URL list for warmup.

Static sitemaps underrepresent dynamically generated pages; access logs capture actual user URL patterns including long-tail queries.

Prioritize By Traffic Weight

Not all URLs warrant equal warmup priority. Sort your URL list by pageview volume and warm the top-N URLs first, with lower-priority content warmed in a background pass.

Respect Rate Limits

Warmup scripts that slam the backend defeat their own purpose. Implement concurrency controls, per-domain rate limiting, and request pacing to ensure warmup traffic stays within the capacity envelope your infrastructure can absorb without origin overload.
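Request pacing can be as simple as spacing out the moments at which requests are allowed to start. The sketch below is a single-threaded illustration with an injectable clock; `Pacer` is a hypothetical helper, and a multi-threaded warmup would need a lock around `wait()`.

```python
import time
from typing import Callable


class Pacer:
    """Spaces calls so that at most `rate_per_second` requests start per second."""

    def __init__(
        self,
        rate_per_second: float,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        if rate_per_second <= 0:
            raise ValueError("rate_per_second must be positive")
        self._interval = 1.0 / rate_per_second
        self._clock = clock
        self._sleep = sleep
        self._next_slot = clock()

    def wait(self) -> float:
        """Block until the next slot opens; return the time spent waiting."""
        now = self._clock()
        delay = max(0.0, self._next_slot - now)
        if delay > 0:
            self._sleep(delay)
        # If we are running late, restart the schedule from the current time.
        self._next_slot = max(now, self._next_slot) + self._interval
        return delay


# Demo with a simulated clock so the example runs instantly.
sim = {"now": 0.0}
pacer = Pacer(
    10,
    clock=lambda: sim["now"],
    sleep=lambda seconds: sim.update(now=sim["now"] + seconds),
)
delays = [pacer.wait() for _ in range(3)]
print([round(d, 3) for d in delays])  # [0.0, 0.1, 0.1]
```

In a real script, calling `pacer.wait()` before each request (with the default clock and sleep) would cap the request rate independently of the worker count.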

Validate Cache-Control Headers Before Warmup

Run a pre-warmup audit to confirm that target URLs return cacheable responses. Warming URLs with no-store or private headers is wasted effort.
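A pre-warmup audit can start from a small check on the Cache-Control header value. The sketch below is a simplification rather than a full implementation of HTTP caching rules: `is_shared_cacheable` is a hypothetical helper that treats a response as worth warming only if it carries a positive `s-maxage` or `max-age` and no `no-store` or `private` directive.

```python
from typing import Dict


def parse_cache_control(value: str) -> Dict[str, str]:
    """Split a Cache-Control value into a {directive: argument} mapping."""
    directives: Dict[str, str] = {}
    for part in value.split(","):
        part = part.strip()
        if not part:
            continue
        name, _, arg = part.partition("=")
        directives[name.strip().lower()] = arg.strip().strip('"')
    return directives


def is_shared_cacheable(cache_control: str) -> bool:
    """Conservative check: can a shared cache (CDN) store and reuse this response?"""
    directives = parse_cache_control(cache_control)
    if "no-store" in directives or "private" in directives:
        return False
    # For shared caches, s-maxage overrides max-age when both are present.
    for name in ("s-maxage", "max-age"):
        if name in directives:
            try:
                return int(directives[name]) > 0
            except ValueError:
                return False
    return False  # no explicit freshness lifetime: skip rather than guess


print(is_shared_cacheable("public, max-age=3600"))   # True
print(is_shared_cacheable("private, max-age=600"))   # False
```

URLs that fail the check can be dropped from the warmup inventory or flagged for a header fix at the origin.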

Include Warmup In CI/CD

Tie warmup execution to the deployment completion event in your CI/CD pipeline.

An automated warmup step triggered by a post-deploy webhook ensures warmup runs automatically with every release, not just when an engineer remembers to trigger it.

Use Request Headers That Match Real Traffic

Replicate Accept-Encoding, Accept-Language, and User-Agent profiles that your CDN uses as cache key components.

Mismatched headers create a parallel, non-shared cache partition that real users never benefit from.

Monitor Warmup Outcomes

Track cache hit ratios and TTFB before and after warmup runs. A warmup that executes without improving metrics indicates a configuration problem: incorrect headers, cache key mismatch, or TTL values too short to survive the warmup-to-traffic window.

How to Monitor Warmup Cache Effectiveness

Monitoring is what separates professional cache warmup implementations from guesswork.

Without measurement, you cannot validate that your warmup is actually working or identify which URLs remain cold after a warmup pass.

Cache Hit Ratio Analysis

The cache hit ratio, the percentage of requests served from cache rather than forwarded to origin, is the primary metric for warmup effectiveness.

Tools: CDN dashboards (Cloudflare Analytics, Akamai mPulse, Fastly Observability), Redis INFO stats (keyspace_hits / keyspace_misses), Nginx access log analysis, and Varnish Cache’s varnishstat utility.

A warmup run that lifts your cache hit ratio from 20% to 85%+ in the first minute of traffic is a success.

A ratio that remains below 60% after warmup indicates incomplete URL coverage, header mismatches, or TTL values that expired before traffic arrived.
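For Redis, note that `keyspace_hits` and `keyspace_misses` are cumulative counters, so measuring the effect of one warmup run means comparing two snapshots. The sketch below assumes each snapshot is a dictionary containing those two fields (for example, what a Redis client returns for `INFO stats`); `window_hit_ratio` is a hypothetical helper name.

```python
from typing import Mapping


def window_hit_ratio(before: Mapping[str, int], after: Mapping[str, int]) -> float:
    """Hit ratio for the interval between two INFO stats snapshots."""
    hits = after["keyspace_hits"] - before["keyspace_hits"]
    misses = after["keyspace_misses"] - before["keyspace_misses"]
    total = hits + misses
    return hits / total if total > 0 else 0.0


# Hypothetical snapshots taken just before and one minute after traffic starts.
snapshot_before = {"keyspace_hits": 1_000, "keyspace_misses": 4_000}
snapshot_after = {"keyspace_hits": 9_500, "keyspace_misses": 5_500}
print(f"{window_hit_ratio(snapshot_before, snapshot_after):.0%}")  # 85%
```

Using the lifetime totals instead of the difference would understate the improvement, because misses from before the warmup would still be counted.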

Performance and Latency Metrics

Measure TTFB and LCP before and after warmup runs using synthetic monitoring (Pingdom, Catchpoint, or your own headless browser probes).

Instrument your CDN and origin with distributed tracing (OpenTelemetry, Datadog APM) to capture the cache hit/miss flag per request and correlate it with latency outcomes.

A well-instrumented warmup script captures response latency and HTTP status codes during execution itself, flagging URLs that return errors, unexpectedly slow origin responses, or status codes that do not belong in the warmup inventory (301 redirects, 404s, 500s).

Availability and Health Checks

Warmup scripts should verify that warmed endpoints are returning correct HTTP status codes (200, 304 where appropriate) and not error responses.

A monitoring dashboard that tracks status code distribution, P95 response latency, and cache-hit confirmation headers (CF-Cache-Status: HIT, X-Cache: HIT) gives operators real-time visibility into warmup coverage.
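Cache-hit confirmation headers can be turned into a coverage number with a few lines of code. This sketch only looks at the two headers named above and treats any value that does not start with "HIT" as not warm; `cache_status` and `coverage` are hypothetical helpers, and header names and values vary by CDN.

```python
from collections import Counter
from typing import Iterable, Mapping

STATUS_HEADERS = ("cf-cache-status", "x-cache")


def cache_status(headers: Mapping[str, str]) -> str:
    """Return "HIT", "MISS", or "UNKNOWN" for one response's headers."""
    lowered = {name.lower(): value for name, value in headers.items()}
    for name in STATUS_HEADERS:
        value = lowered.get(name, "").strip().upper()
        if value.startswith("HIT"):
            return "HIT"
        if value:
            return "MISS"  # MISS, EXPIRED, BYPASS, etc. all count as not warm
    return "UNKNOWN"


def coverage(responses: Iterable[Mapping[str, str]]) -> float:
    """Fraction of responses confirmed as cache hits."""
    counts = Counter(cache_status(headers) for headers in responses)
    total = sum(counts.values())
    return counts["HIT"] / total if total else 0.0


observed = [
    {"CF-Cache-Status": "HIT"},
    {"CF-Cache-Status": "HIT"},
    {"X-Cache": "HIT"},
    {"CF-Cache-Status": "MISS"},
]
print(coverage(observed))  # 0.75
```

Running a second pass over the warmup inventory and computing coverage from the responses gives a direct measure of how many URLs are actually warm.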

Common Challenges in Cache Warmup

URL Space Explosion

Applications with millions of URLs (e-commerce product catalogs, news archives, user-generated content) cannot be fully warmed in finite time.

The solution is priority-based warmup: covering the high-traffic URL tier completely, with diminishing coverage as traffic importance decreases.

Cache Key Complexity

CDN configurations that vary cache keys by device type, geolocation, authentication state, or cookie values multiply the effective URL space that must be warmed.

Each unique cache key variant requires its own warmup request.

Short TTL Values

Content with TTL values shorter than the warmup-to-traffic interval will expire before users arrive.

Either extend TTLs for critical content during deployment windows or schedule warmup to complete as close to traffic arrival as possible.

Thundering Herd Risk From Warmup Itself

A poorly configured warmup script that dispatches thousands of concurrent origin requests can generate the same backend overload it was designed to prevent. Concurrency limits and request pacing are non-negotiable.

Dynamic Content

Personalized or session-dependent content cannot be cached and warmed in the traditional sense.

The solution is to identify the cacheable segments of dynamic pages (the “shell” of an SPA, the navigation tree, the product data) and warm those components independently, accepting that personalized content will always require origin fetches.

Geo-Distribution Gaps

Warming only the edge node closest to your warmup script’s origin leaves most of the global CDN cold.

Effective multi-region warmup requires either CDN-native geo-aware APIs or warmup infrastructure deployed across multiple cloud regions.

Things to Avoid

Warming Everything by Default

Indiscriminate warmup (attempting to pre-warm every URL in your application regardless of traffic priority) is a common and costly mistake.

For large applications with millions of URLs, exhaustive warming is computationally infeasible and wastes origin capacity on content that will never be requested by real users. Focus warmup investment on the top-N URLs by traffic volume.

One-time Warms with No Updates

Cache warmup is not a one-time operation. Content changes, TTLs expire, and deployments invalidate cached responses continuously.

A warmup implementation without scheduled re-warming or event-driven update triggers will gradually diverge from the actual content your users need, providing false confidence that the cache is warm when it has in fact gone cold.

Not Measuring Effectiveness

Warmup without measurement is theater. If you are not tracking cache hit ratios, TTFB before and after warmup runs, and the fraction of your URL inventory successfully warmed, you have no evidence that your warmup is working and no early warning when a configuration change breaks it.

Warmups that Slam the Backend

High-concurrency warmup scripts without rate limiting defeat their own purpose: they generate the same thundering herd of origin requests they were designed to prevent.

Always configure concurrency limits, per-second request caps, and request pacing in warmup scripts.

A warmup that takes 5 minutes to complete safely is far preferable to one that takes 30 seconds but crashes your origin.

Security Considerations for Warmup Cache Requests

Cache warmup automation introduces attack surface that must be explicitly addressed.

Preventing Abuse of Warmup Mechanisms

Warmup endpoints that accept URLs as parameters, a common pattern for on-demand warming, are vulnerable to cache poisoning if input is not strictly validated.

An attacker who can inject a malicious URL into a warmup job can force the cache to store a response from an attacker-controlled origin.

Mitigate this by maintaining an allow-list of warmable URL patterns and validating all inputs against it before dispatching requests.
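An allow-list check might look like the sketch below. The host names and path prefixes are placeholders, and `is_warmable` is a hypothetical helper; it does not decode percent-encoded path segments, so a production validator would need to normalize the URL first.

```python
from urllib.parse import urlsplit

# Hypothetical allow-list: replace with the hosts and paths you actually serve.
ALLOWED_HOSTS = {"www.example.com", "static.example.com"}
ALLOWED_PATH_PREFIXES = ("/products/", "/category/", "/assets/")


def is_warmable(url: str) -> bool:
    """Accept only HTTPS URLs on known hosts and under known path prefixes."""
    try:
        parts = urlsplit(url)
        port = parts.port  # raises ValueError for malformed or out-of-range ports
    except ValueError:
        return False
    if parts.scheme != "https":
        return False
    if parts.username or parts.password:
        return False  # rejects "https://trusted@attacker.example/" tricks
    if parts.hostname not in ALLOWED_HOSTS:
        return False
    if port not in (None, 443):
        return False
    if ".." in parts.path.split("/"):
        return False  # no path traversal out of an allowed prefix
    return parts.path.startswith(ALLOWED_PATH_PREFIXES)


print(is_warmable("https://www.example.com/products/42"))   # True
print(is_warmable("https://evil.example.net/products/42"))  # False
```

Rejected URLs are best logged rather than silently dropped, since a burst of rejections is itself a useful abuse signal.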

Web application firewall (WAF) rules should be configured to flag anomalous patterns consistent with warmup abuse: unusually high request rates to cache-related endpoints from unexpected IP ranges, or requests with unusual header combinations.

Securing Warmup Automation

Warmup scripts that run as part of CI/CD pipelines should use short-lived credentials (OIDC tokens, IAM roles with time-bounded policies) rather than long-lived API keys.

Secrets management platforms (HashiCorp Vault, AWS Secrets Manager) should store any CDN API keys used for geo-aware warmup calls.

IP allowlist restrictions on warmup endpoints, limiting the IPs that can trigger cache population, prevent external abuse while preserving automation functionality.

In Kubernetes environments, network policies can restrict warmup pod egress to the CDN API endpoints they legitimately need to reach.

Coordinated Firewall Controls

DDoS protection platforms (Cloudflare, Akamai Kona Site Defender) may classify a warmup script’s high-request-rate traffic as attack traffic and block it.

Configure your firewall rules to recognize warmup traffic by User-Agent string or source IP range and apply appropriate rate limits rather than hard blocks.

Coordinate with your CDN provider’s support team before large-scale warmup runs to ensure monitoring alerts are not triggered incorrectly.

Advanced Cache Warmup Techniques

Production-grade warmup implementations go beyond simple URL lists and curl loops.

The following patterns represent the current state of the art for high-scale, high-reliability cache warming.

Event-Driven Warmup

A warmup subscriber attached to a message queue (Kafka, SQS, Redis pubsub) triggers targeted cache invalidation and re-warming whenever a change event is published: a product update, a content publish, a price change.

Rather than warming everything on a schedule, the system warms precisely the cache keys affected by each change.

This pattern minimizes unnecessary origin load while maintaining tight freshness guarantees.

Change Data Capture (CDC) Integration

In database-backed caches (Redis, Aerospike), CDC streaming (Debezium, AWS DMS) can detect row-level changes in the source database and trigger cache updates in real time.

The cache is never cold because updates flow directly from the database change stream, bypassing the TTL expiry cycle entirely.

Deployment-Time Warmup with Blue/Green Traffic Shifting

In blue/green deployment architectures, warmup runs against the green environment before any traffic is shifted.

An automated warmup step validates that cache hit ratios on the green origin have reached a configured threshold (typically 85%) and only then signals the load balancer to begin traffic migration.

If warmup fails the threshold check, the deployment is paused for investigation.

Pipeline-Based Warmup for Redis Clusters

For applications using Redis Cluster for application-level caching, pipeline operations (pipeline(), execute()) dramatically increase warmup throughput by batching multiple setex commands in a single network round-trip.

Using sorted sets and hash tags for consistent key distribution, this approach can warm millions of keys per minute on modern Redis infrastructure.
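A batched pipeline warmup could be sketched as follows. `warm_keys` is a hypothetical helper that accepts any client exposing redis-py style `pipeline()`, `setex()`, and `execute()` methods; the fake client below exists only so the example runs without a Redis server, and the batch size is an arbitrary assumption.

```python
from typing import Any, Dict, Iterable, List, Tuple


def warm_keys(
    client: Any,
    items: Iterable[Tuple[str, str]],
    ttl_seconds: int,
    batch_size: int = 500,
) -> int:
    """Write (key, value) pairs with a TTL, one network round trip per batch."""
    pipe = client.pipeline(transaction=False)
    written = 0
    pending = 0
    for key, value in items:
        pipe.setex(key, ttl_seconds, value)  # queued locally, not sent yet
        pending += 1
        if pending >= batch_size:
            pipe.execute()                   # flush the batch in one round trip
            written += pending
            pending = 0
    if pending:
        pipe.execute()
        written += pending
    return written


class FakePipeline:
    """Stand-in that mimics the two pipeline methods used above."""

    def __init__(self) -> None:
        self.queued: List[Tuple[str, int, str]] = []
        self.stored: Dict[str, str] = {}
        self.round_trips = 0

    def setex(self, name: str, time: int, value: str) -> "FakePipeline":
        self.queued.append((name, time, value))
        return self

    def execute(self) -> List[bool]:
        results = [True] * len(self.queued)
        for name, _ttl, value in self.queued:
            self.stored[name] = value
        self.queued = []
        self.round_trips += 1
        return results


class FakeClient:
    def __init__(self) -> None:
        self.pipe = FakePipeline()

    def pipeline(self, transaction: bool = True) -> FakePipeline:
        return self.pipe


fake = FakeClient()
count = warm_keys(fake, [(f"product:{i}", "cached") for i in range(5)], ttl_seconds=3600, batch_size=2)
print(count, fake.pipe.round_trips)  # prints: 5 3
```

Five keys with a batch size of two take three round trips instead of five; the saving grows with larger batches.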

Predictive Warmup Based on User Behavior

The most sophisticated warmup implementations use behavioral data to warm the cache before users explicitly request content, anticipating demand based on historical patterns and real-time signals.

Prefetch Link Analysis

By analyzing which URLs are linked from the current page, a predictive warmup system can pre-warm the most likely next-page destinations before the user navigates.

This is the server-side equivalent of browser prefetch hints such as `<link rel="prefetch">`, but operating at the CDN layer.

A/B Test Segment Awareness

When multiple variants of a page are served to different user segments, warmup must cover all active variants.

A predictive warmup script that reads active A/B test configurations from an experiment management system can ensure all variants are warmed without manual URL management.

Geo-Aware Edge Warmup

Traffic to a global CDN is not uniformly distributed. A geo-aware warmup strategy targets edge nodes in proportion to their expected traffic share, concentrating warmup effort on the PoPs serving your largest user geographies while still covering lower-traffic regions with a reduced warmup pass.

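Some CDN providers expose cache population or prefetch APIs that let warmup scripts target specific edge locations or regions; where they do not, running warmup workers from multiple geographic regions achieves a similar effect.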

Combined with a geographic traffic analysis from your CDN’s analytics, this produces a warmup strategy calibrated to actual demand distribution rather than geographic completeness for its own sake.
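Splitting a warmup budget by traffic share, with a minimum for low-traffic regions, can be sketched like this. The region names, weights, and floor are hypothetical, and `allocate_requests` is an illustrative helper rather than part of any CDN API.

```python
from typing import Dict


def allocate_requests(
    traffic_weight: Dict[str, float],
    budget: int,
    floor: int = 0,
) -> Dict[str, int]:
    """Split `budget` warmup requests across regions in proportion to traffic.

    Every region receives at least `floor` requests, so the total can exceed
    `budget` when low-traffic regions are topped up.
    """
    total = sum(traffic_weight.values())
    if total <= 0:
        raise ValueError("traffic weights must sum to a positive number")
    return {
        region: max(floor, round(budget * weight / total))
        for region, weight in traffic_weight.items()
    }


# Hypothetical traffic split taken from CDN analytics (percent of requests).
weights = {"us-east": 60, "eu-west": 30, "ap-south": 10}
print(allocate_requests(weights, budget=1000, floor=150))
# {'us-east': 600, 'eu-west': 300, 'ap-south': 150}
```

The floor is what keeps lower-traffic regions from being skipped entirely.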

Image Variant Pre-Caching

Modern responsive design generates multiple image variants per asset: different resolutions for different device classes, different formats (WebP, AVIF, JPEG) for different browser capabilities, and different aspect ratios for different layout contexts.

A naive warmup that requests only the canonical image URL warms only one variant, leaving all others cold.

Image variant pre-caching maps each source image to its full set of derivative variants and warms each combination.

For a product catalog with 10,000 images and 5 size/format combinations each, this represents 50,000 warmup requests. With 20 concurrent workers and average 200ms origin response times, each worker handles 2,500 requests, so the entire pass completes in roughly 8–9 minutes (about 500 seconds). Manageable, but it requires explicit planning rather than a naive single-URL warmup.
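A back-of-the-envelope sketch of such a pass is shown below. The URL scheme (`?w=` and `&fm=` query parameters), the five variants, and the file names are all hypothetical, and the estimate assumes each worker issues requests back to back with no pacing or retries.

```python
import math
from typing import List, Tuple

# Hypothetical (width, format) combinations produced by the site's breakpoints.
VARIANTS: List[Tuple[int, str]] = [
    (320, "webp"),
    (640, "webp"),
    (1280, "webp"),
    (640, "avif"),
    (1280, "jpeg"),
]


def variant_urls(image_path: str, variants: List[Tuple[int, str]]) -> List[str]:
    """Expand one source image into one URL per size/format variant."""
    return [f"{image_path}?w={width}&fm={fmt}" for width, fmt in variants]


def estimate_seconds(request_count: int, workers: int, avg_latency_s: float) -> float:
    """Rough duration: requests per worker multiplied by average latency."""
    return math.ceil(request_count / workers) * avg_latency_s


urls = [
    url
    for i in range(10_000)
    for url in variant_urls(f"/img/product-{i}.jpg", VARIANTS)
]
seconds = estimate_seconds(len(urls), workers=20, avg_latency_s=0.2)
print(len(urls), f"{seconds / 60:.1f} min")  # prints: 50000 8.3 min
```

Doubling the worker count halves the estimate, but only if the origin can absorb the extra concurrency.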

When Warmup Cache Requests Are Essential

Cache warmup is not optional infrastructure in the following scenarios:

Major Deployments With Cache Purges

Any deployment that includes a full cache invalidation (version-based cache busting, cache key migration, or forced purge following a security incident) requires warmup to prevent cold cache exposure to real users.

High Traffic Launches And Events

Product launches, sale events, content premieres, and any planned traffic spike require warmup as a prerequisite. The traffic arrives in a compressed window; there is no time for organic warming.

Multi-Region Or CDN Migrations

Migrating from one CDN provider to another, or expanding to new geographic regions, starts with a completely cold cache across the new infrastructure.

Warmup is the mechanism for achieving cache readiness before traffic is cut over.

Serverless And Edge Function Environments

Cold start problems in serverless environments (AWS Lambda, Cloudflare Workers, Vercel Edge) are compounded by cold cache states.

Warmup that triggers function invocation and populates the associated edge cache simultaneously addresses both problems.

Performance-Regression-Sensitive Applications

Applications subject to SLA guarantees or strong competitive performance expectations cannot afford the random latency variance of a cold cache window. Warmup is the mechanism for enforcing performance floors.

How Warmup Cache Improves User Experience

The UX impact of cache warmup is direct and measurable. When warmup is implemented correctly:

- First-visit users receive production-quality performance, not the degraded experience of a cache miss chain

- Perceived reliability increases because performance is consistent across the day, not spiking after deployments

- LCP and TTFB targets are met from the first request, not only after organic traffic has primed the cache

- Pages render without FOUT because stylesheets and fonts are delivered from cache simultaneously with HTML

- API-driven interfaces load instantly because JSON responses for common queries are already in the edge cache

- Mobile users benefit disproportionately because the latency savings from edge delivery are largest on high-latency mobile connections

The cumulative effect on behavioral metrics (bounce rate, session duration, conversion rate, return visit frequency) is well documented in performance engineering literature.

Cache warmup is one of the highest-ROI performance optimizations available because it improves the experience of every user during every deployment window, without requiring any changes to the application code itself.

Cache Warming in Serverless & Edge Environments

Serverless architectures introduce a specific variant of the cold cache problem: the cold start. When a Lambda function, Cloudflare Worker, or Vercel Edge function is invoked for the first time (or after a period of inactivity), it must initialize its runtime, load its code bundle, and establish any necessary connections before it can serve a response.

This initialization latency, which ranges from tens to hundreds of milliseconds depending on runtime and bundle size, adds to the response time experienced by the triggering user.

Cache warming in serverless environments addresses both the function cold start and the edge cache cold state simultaneously. A warmup request that triggers a function invocation:

- Forces the platform to initialize a warm function instance

- Executes the function’s cache-population logic (fetching from database, calling APIs)

- Populates the edge cache with the function’s response

- Ensures subsequent requests hit a warm function and a warm edge cache

Scheduled warmup pings (lightweight HTTP requests dispatched every few minutes to keep function instances warm) are a common pattern for latency-sensitive serverless functions.

AWS EventBridge, Cloudflare Cron Triggers, and Vercel’s built-in cron functionality all support this pattern.
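As a rough illustration, the handler that such a scheduler invokes could look like the Python sketch below. The endpoint URLs, the `warm_endpoints` and `http_get_status` names, and the injectable `fetch` parameter are assumptions made for this example and are not part of any platform's API.

```python
import urllib.request
from concurrent.futures import ThreadPoolExecutor

# Hypothetical endpoints; replace with the functions you need to keep warm.
ENDPOINTS = [
    "https://example.com/api/products",
    "https://example.com/api/config",
]


def http_get_status(url, timeout=5.0):
    """Issue a GET request and return the HTTP status code."""
    request = urllib.request.Request(url, headers={"User-Agent": "cache-warmup/1.0"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


def warm_endpoints(urls, fetch=http_get_status, workers=4):
    """Ping every URL concurrently; map each URL to its status or an error string."""

    def attempt(url):
        try:
            return url, fetch(url)
        except Exception as exc:  # one failing endpoint must not abort the pass
            return url, f"error: {exc}"

    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(attempt, urls))
```

A scheduled job would call `warm_endpoints(ENDPOINTS)` and log the returned mapping. Passing `fetch` as a parameter keeps the function easy to exercise without network access.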

For Redis-backed serverless functions, you can pre-populate the in-memory cache by adding an automated warmup step to the deployment pipeline: a step that connects to the Redis endpoint and seeds the expected key space before the first function invocation.
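A minimal sketch of such a seeding step, assuming the pipeline passes in a client object that exposes redis-py's `set(name, value, ex=...)` method. The `seed_cache` name, the default TTL, and the idea of supplying entries as a dictionary are illustrative choices.

```python
def seed_cache(client, entries, ttl_seconds=3600):
    """Write each key/value pair with an expiry and return how many were written.

    `client` is assumed to behave like a redis-py client: set(name, value, ex=...).
    """
    written = 0
    for key, value in entries.items():
        client.set(key, value, ex=ttl_seconds)
        written += 1
    return written
```

In a real pipeline the client would typically be created from the deployment's Redis URL, and the entries would come from the same queries the functions run on a cache miss. Setting an expiry on every key keeps seeded data from outliving the TTL the application expects.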

Cache Warming vs Cache Prefetching

These terms are often confused, but they address different problems:

| Dimension | Cache Warming | Cache Prefetching |
| --- | --- | --- |
| Trigger | Deployment, schedule, or event | User action or navigation signal |
| Timing | Before user traffic arrives | During an active user session |
| Target | Full URL inventory | Predicted next-page resources |
| Mechanism | Server-side HTTP requests | Browser resource hints (e.g. rel="prefetch") or server push |
| Scope | Infrastructure-wide | Per-user session |
| Primary benefit | Eliminate cold cache at deployment | Reduce perceived latency for navigation |

Cache warming is an infrastructure operation. It happens before users arrive and is orchestrated by backend systems.

Cache prefetching is a client- or server-side optimization that predicts and pre-loads resources a specific user is likely to need next, based on their current navigation context.

Both techniques are complementary and should be used together. Cache warming ensures the infrastructure is ready; cache prefetching optimizes the individual user’s journey through your application.

FAQs

How Long Does It Take To Warm Up The Cache?

Warmup duration depends on the size of your URL inventory, your concurrency settings, and origin response latency. For a well-configured script with 20 concurrent workers, warming 1,000 URLs with average 200ms origin response times takes approximately 10–15 seconds. Large URL spaces (100,000+ URLs) scale proportionally, to roughly 15–20 minutes at the same settings, and may call for a phased warmup strategy that prioritizes high-traffic content. CDN geo-aware warming that targets multiple edge locations multiplies this estimate by the number of distinct regions being warmed.
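The arithmetic behind these estimates can be written down as a small Python helper. It is a lower bound that assumes every worker processes its share of URLs back to back and that regions are warmed one after another. It ignores retries, rate limiting, and connection overhead, so real passes tend to run somewhat longer. The function name and parameters are illustrative.

```python
import math


def estimate_warmup_seconds(url_count, workers, avg_response_seconds, regions=1):
    """Lower-bound duration: sequential batches per worker, repeated per region."""
    batches = math.ceil(url_count / workers)
    return batches * avg_response_seconds * regions
```

With the figures above, `estimate_warmup_seconds(1000, 20, 0.2)` comes to about 10 seconds, and the same settings applied to 100,000 URLs give about 1,000 seconds, or roughly 17 minutes.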

What Is The Cache Warm-Up Strategy?

A cache warm-up strategy is the combination of URL selection logic, request execution approach, timing, and monitoring that governs how you pre-populate your cache before user traffic arrives. Effective strategies incorporate traffic-weighted URL prioritization, automated execution tied to deployment and TTL events, request header replication for correct cache key targeting, rate limiting to protect origin, and post-warmup validation against cache hit ratio and latency metrics.
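As one example of the URL selection piece, traffic-weighted prioritization can be sketched in a few lines of Python. The sketch assumes you can extract the requested paths from an access log or analytics export. The `prioritize_urls` name and the `limit` cutoff are hypothetical.

```python
from collections import Counter


def prioritize_urls(requested_paths, limit):
    """Return the `limit` most frequently requested paths, most popular first."""
    counts = Counter(requested_paths)
    return [path for path, _ in counts.most_common(limit)]
```

Warming the returned list in order means that if the warmup window is cut short, the paths that account for the most traffic have already been covered.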

Are POST Requests Cacheable By Default?

No. POST requests are not cacheable by default under the HTTP specification, and most CDNs and reverse proxies will not cache POST responses unless explicitly configured to do so. Cache warmup typically targets GET requests, which are cacheable when the response includes appropriate cache-control headers. If your application uses POST for read operations (a common GraphQL pattern), you may need CDN-specific configuration to enable POST response caching, after which warmup of those endpoints becomes possible.

Is Manual Or Automated Cache Warmup Better?

Automated cache warmup is superior for production environments in virtually every case. Manual warmup depends on human memory and execution, fails to account for the full URL inventory, and cannot operate at the speed and scale required after CI/CD deployments. Automated warmup tied to deployment hooks, cron schedules, and cache invalidation events provides consistent, comprehensive coverage without operator intervention. Manual warmup is appropriate only for small-scale applications or one-off events where automation overhead is not justified.

What Are The Risks Of Skipping Cache Warm Up In High Traffic Or Serverless Environments?

Skipping warmup in high-traffic environments exposes you to thundering herd effects, where a traffic spike coinciding with a cold cache overwhelms origin servers. In serverless environments, the compound effect of function cold starts and edge cache misses can produce latency spikes of 1,000ms+ for the first requests after deployment. For applications with SLA commitments, planned traffic events, or revenue-sensitive performance metrics, skipping warmup is a reliability risk with direct cost implications.

Is Cache Warmup Useful For Dynamic Content?

Cache warmup is most effective for static or semi-static content: HTML pages, images, CSS/JS bundles, and API responses that change infrequently and are served with public cache headers. Fully dynamic, personalized content (user-specific dashboards, real-time data feeds) cannot be pre-warmed in the traditional sense because the cache key includes user-specific identifiers. However, the cacheable structural components of dynamic pages (navigation, product data, configuration endpoints) can and should be warmed even when the full page response is not cacheable.
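A warmup script can apply this distinction automatically by inspecting response headers before adding a URL to its inventory. The Python sketch below is a conservative heuristic, not a statement of how any particular CDN decides cacheability. The `is_warmable` name and the choice to skip any response that sets a cookie are assumptions.

```python
def is_warmable(headers):
    """Return True if the headers suggest a shared cache may store the response."""
    normalized = {name.lower(): value for name, value in headers.items()}
    directives = {
        part.strip().split("=", 1)[0].lower()
        for part in normalized.get("cache-control", "").split(",")
        if part.strip()
    }
    # These directives rule out serving a stored copy from a shared cache
    # without going back to the origin first.
    if directives & {"private", "no-store", "no-cache"}:
        return False
    # Assumption: a response that sets a cookie is treated as user-specific.
    if "set-cookie" in normalized:
        return False
    return bool(directives & {"public", "max-age", "s-maxage"})
```

URLs that fail this check are better left out of the warmup list, since requesting them adds origin load without producing a reusable cache entry.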

Does Cache Warmup Affect CDN Or Edge Server Performance?

Warmup requests consume CDN edge capacity during the warmup window, but the load is minimal compared to real traffic. Well-configured warmup scripts pace their requests to stay within CDN rate limits. The outcome of warmup, a high cache hit ratio, reduces the total load on CDN edge servers during real traffic because cache hits require less computation than cache misses that must be proxied to origin.
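Pacing can be as simple as enforcing a minimum interval between request starts. The following Python sketch is sequential and deliberately minimal. The `paced_warmup` name, the `max_per_second` parameter, and the injectable `sleep` and `clock` arguments are assumptions for illustration, and the actual limit to respect depends on your CDN plan.

```python
import time


def paced_warmup(urls, fetch, max_per_second, sleep=time.sleep, clock=time.monotonic):
    """Call fetch(url) for each URL without starting more than max_per_second requests per second."""
    interval = 1.0 / max_per_second
    results = []
    next_start = clock()
    for url in urls:
        now = clock()
        if now < next_start:
            sleep(next_start - now)  # wait until the next request slot opens
        next_start = max(now, next_start) + interval
        results.append((url, fetch(url)))
    return results
```

Here `fetch` is any callable that takes a URL and performs the request. Because slow responses already space requests out, the function only sleeps when the previous request finished before the next slot opened.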

Can Warming The Cache Improve Website Load Times For Global Users?

Yes, particularly when geo-aware edge warmup is implemented. Without warmup, users in regions distant from your origin server experience the full round-trip latency of an origin fetch on their first request. With warmup that targets CDN edge nodes in those regions, the response is served locally, dramatically reducing latency. For a globally distributed application, geo-aware warmup is one of the highest-impact performance optimizations for users in emerging markets and regions far from primary data centers.